Evaluation

Fine-tuning is a critical phase of the model development lifecycle, but its value depends on whether the resulting weight updates actually improve performance on the target objectives. This technical reference defines a standard procedure for evaluating fine-tuned models across architectures and dataset configurations, so that each model can be checked against performance, safety, and domain-specific requirements. Because each architecture maps to a dedicated evaluation suite, results remain consistent and reproducible across experimental runs.

The following table summarizes, for each task, the model architecture, the primary metrics reported, and the functional focus of those metrics.

| Purpose | Architecture | Primary Metrics | Functional Focus |
|---|---|---|---|
| Similarity / Embedding | Bi-Encoder | val_loss, spearman, pearson, kendall_tau, mse, mae | Rank Correlation: Measures alignment between predicted cosine similarity and ground-truth scores. |
| Reranking | Cross-Encoder | val_loss, roc_auc, accuracy, f1, average_precision | Relevance Ranking: Evaluates the capability to distinguish between relevant and irrelevant query-document pairs. |
| Multi-Label Classification | Single Encoder | val_loss, lrap, f1_macro, f1_micro, jaccard | Label Ranking: Assesses the accuracy of predicting multiple non-exclusive tags per input. |
| Multi-Class Classification | Single Encoder | val_loss, accuracy, f1_macro, f1_weighted | Categorical Accuracy: Measures performance in mutually exclusive classification tasks across balanced or imbalanced classes. |
| Chat / Conversational | Decoder | val_loss, perplexity, accuracy | Language Modeling: Quantifies text fluency, token prediction accuracy, and distributional uncertainty. |

Metrics

Similarity

Evaluation focuses on the relationship between vector space proximity and human-labeled similarity.

- Spearman / Kendall Tau: Non-parametric measures of the monotonic relationship between predicted and ground-truth scores. For ranking tasks they are preferred over Pearson, which measures linear correlation, because they depend only on the relative order of pairs and not on the exact score values.

- MSE / MAE: Mean Squared Error and Mean Absolute Error quantify the direct deviation between predicted and target similarity scores.
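To make the Pearson versus Spearman distinction concrete, here is a minimal, dependency-free Python sketch of the similarity metrics. It is not Protean AI's implementation: the function names and data are hypothetical, and Kendall's tau is computed in its simple tau-a form (scipy.stats.kendalltau defaults to tau-b, which differs from tau-a only when ties are present).

```python
import math
from statistics import mean


def pearson(x, y):
    """Pearson correlation: strength of the linear relationship between x and y."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def ranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg_rank
        i = j + 1
    return result


def spearman(x, y):
    """Spearman correlation: Pearson correlation computed on the ranks."""
    return pearson(ranks(x), ranks(y))


def kendall_tau_a(x, y):
    """Kendall's tau-a: (concordant - discordant pairs) / all pairs, no tie correction."""
    n = len(x)
    score = 0
    for i in range(n):
        for j in range(i + 1, n):
            sign = (x[i] - x[j]) * (y[i] - y[j])
            score += (sign > 0) - (sign < 0)
    return score / (n * (n - 1) / 2)


def mse(pred, gold):
    return mean((p - g) ** 2 for p, g in zip(pred, gold))


def mae(pred, gold):
    return mean(abs(p - g) for p, g in zip(pred, gold))


# Hypothetical data: the model preserves the order of the gold scores
# but compresses them non-linearly (pred = gold ** 3).
gold = [0.1, 0.3, 0.5, 0.7, 0.9]
pred = [g ** 3 for g in gold]

print("spearman", round(spearman(pred, gold), 4))   # 1.0: ranking fully preserved
print("kendall ", kendall_tau_a(pred, gold))        # 1.0: every pair concordant
print("pearson ", round(pearson(pred, gold), 4))    # below 1.0: not linear
print("mse/mae ", round(mse(pred, gold), 4), round(mae(pred, gold), 4))
```

Because the predictions are a monotonic transform of the gold scores, both rank correlations are perfect while Pearson is not, and MSE/MAE still report the absolute score shift.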

Reranking

Evaluation treats the task as binary classification of query-document pairs (relevant vs. irrelevant), using the model's relevance score to rank them.

- ROC-AUC: Indicates the probability that the model will rank a randomly chosen positive instance higher than a randomly chosen negative one.

- Average Precision: Summarizes the precision-recall curve, providing a single score that represents the quality of the ranked results.
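The pairwise definition of ROC-AUC and the ranked-list summary behind Average Precision can be sketched in plain Python as follows. This is an illustrative sketch with hypothetical names and data, not the platform's code; it assumes binary relevance labels, at least one relevant pair, and no tied scores in the AP calculation (scikit-learn's roc_auc_score and average_precision_score are the usual library equivalents).

```python
def roc_auc(labels, scores):
    """P(random positive scores higher than random negative); ties count as 0.5."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def average_precision(labels, scores):
    """Mean of precision@k over the ranks k at which relevant pairs appear.

    Assumes at least one relevant pair and no tied scores.
    """
    ranked = sorted(zip(scores, labels), key=lambda t: t[0], reverse=True)
    hits = 0
    precisions = []
    for k, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            precisions.append(hits / k)
    return sum(precisions) / hits


# Hypothetical cross-encoder scores for five query-document pairs (1 = relevant).
labels = [1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.3, 0.1]
print(round(roc_auc(labels, scores), 4))            # 5 of 6 pos/neg pairs ordered correctly
print(round(average_precision(labels, scores), 4))  # mean of precision@1 and precision@3
```

A single irrelevant document scored between the two relevant ones lowers both metrics, which is the kind of ordering error a reranker is meant to avoid.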

Multi-Label Classification

Designed for tasks where multiple labels apply simultaneously to a single input.

- LRAP (Label Ranking Average Precision): Evaluates the model's ability to rank all relevant labels higher than irrelevant ones.

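- Jaccard Score: Measures the intersection over union of the predicted and ground-truth label sets. It penalizes both missing and spurious labels and reaches 1.0 only when the two sets match exactly, making it a strict measure of set agreement.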

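- F1-Micro vs. Macro: Micro pools true positives, false positives, and false negatives across all labels before computing a single F1, so frequent labels dominate. Macro computes F1 per label and takes the unweighted mean, so rare labels count as much as frequent ones.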
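The sketch below computes the three multi-label metrics from 0/1 label matrices, using hypothetical data in which label 0 is frequent and label 2 is rare. The 0.5 decision threshold, the function names, and the convention that an empty prediction matching an empty label set scores 1.0 in Jaccard are assumptions for illustration; scikit-learn's label_ranking_average_precision_score, jaccard_score (average="samples"), and f1_score provide library versions.

```python
def f1_from_counts(tp, fp, fn):
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def f1_micro_macro(y_true, y_pred):
    """y_true / y_pred: lists of 0/1 rows, one column per label."""
    n_labels = len(y_true[0])
    counts = []
    for j in range(n_labels):
        pairs = [(t[j], p[j]) for t, p in zip(y_true, y_pred)]
        tp = sum(1 for t, p in pairs if t and p)
        fp = sum(1 for t, p in pairs if not t and p)
        fn = sum(1 for t, p in pairs if t and not p)
        counts.append((tp, fp, fn))
    # Micro: pool counts over all labels, then compute one F1.
    micro = f1_from_counts(*[sum(c[i] for c in counts) for i in range(3)])
    # Macro: one F1 per label, then an unweighted mean.
    macro = sum(f1_from_counts(*c) for c in counts) / n_labels
    return micro, macro


def jaccard_samples(y_true, y_pred):
    """Per-sample intersection / union of label sets, averaged (empty vs empty = 1.0)."""
    total = 0.0
    for t, p in zip(y_true, y_pred):
        inter = sum(1 for a, b in zip(t, p) if a and b)
        union = sum(1 for a, b in zip(t, p) if a or b)
        total += inter / union if union else 1.0
    return total / len(y_true)


def lrap(y_true, y_score):
    """Label ranking average precision; assumes every sample has a relevant label."""
    total = 0.0
    for truth, scores in zip(y_true, y_score):
        relevant = [j for j, t in enumerate(truth) if t]
        precisions = []
        for j in relevant:
            # Labels scored at least as high as label j, and how many are relevant.
            at_or_above = [k for k, s in enumerate(scores) if s >= scores[j]]
            hits = sum(1 for k in at_or_above if truth[k])
            precisions.append(hits / len(at_or_above))
        total += sum(precisions) / len(precisions)
    return total / len(y_true)


# Hypothetical data: label 0 is frequent, label 2 is rare.
y_true = [[1, 0, 0], [1, 1, 0], [1, 0, 1], [1, 0, 0]]
y_score = [[0.9, 0.2, 0.1], [0.8, 0.7, 0.1], [0.9, 0.1, 0.4], [0.7, 0.2, 0.3]]
y_pred = [[int(s >= 0.5) for s in row] for row in y_score]  # assumed 0.5 threshold

micro, macro = f1_micro_macro(y_true, y_pred)
print(round(micro, 3), round(macro, 3))    # the missed rare label hurts macro more
print(jaccard_samples(y_true, y_pred))     # 0.875
print(lrap(y_true, y_score))               # 1.0
```

Thresholding at 0.5 misses the rare label once, which drags F1-Macro (0.667) well below F1-Micro (0.909) and lowers Jaccard, while LRAP stays at 1.0 because the rare label is still ranked above every irrelevant one. LRAP judges the ranking, not the threshold.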

Multi-Class Classification

Designed for mutually exclusive classification, where each input belongs to exactly one class.

- F1-Weighted: Computes the F1 score for each class and averages them, weighted by support (the number of true instances of each class). This accounts for class imbalance; unlike F1-Macro, which weights every class equally, it lets frequent classes dominate the average.

- Accuracy: The ratio of correct predictions to total observations; used as a baseline for balanced datasets.
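A short sketch of why accuracy alone can mislead on imbalanced data. It computes accuracy, F1-Macro, and F1-Weighted from scratch for a hypothetical model that only ever predicts the majority class; the class names and data are invented, and scikit-learn's f1_score with average="macro" or average="weighted" is the standard library equivalent.

```python
from collections import Counter


def f1_for_class(y_true, y_pred, cls):
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == cls and p == cls)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t != cls and p == cls)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == cls and p != cls)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def multiclass_metrics(y_true, y_pred):
    classes = sorted(set(y_true) | set(y_pred))
    support = Counter(y_true)  # true instances per class
    f1 = {c: f1_for_class(y_true, y_pred, c) for c in classes}
    accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
    f1_macro = sum(f1.values()) / len(classes)  # every class counts equally
    f1_weighted = sum(f1[c] * support[c] for c in classes) / len(y_true)  # by support
    return {"accuracy": accuracy, "f1_macro": f1_macro, "f1_weighted": f1_weighted}


# Hypothetical imbalanced validation set: 9 "billing" vs 1 "fraud" ticket,
# and a model that always predicts the majority class.
y_true = ["billing"] * 9 + ["fraud"]
y_pred = ["billing"] * 10
print({k: round(v, 3) for k, v in multiclass_metrics(y_true, y_pred).items()})
```

Accuracy (0.9) and F1-Weighted (about 0.85) both look healthy, but F1-Macro (about 0.47) exposes that the minority class is never predicted.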

Chat

Evaluation centers on probability distributions across the vocabulary.

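- Perplexity: The exponential of the average per-token negative log-likelihood on the validation set, i.e., a measure of how well the model's predicted distribution predicts the reference text. Lower perplexity means the model assigns higher probability to the reference tokens, which generally indicates a more confident and fluent model.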

- Accuracy: In this context, the fraction of validation tokens for which the model's highest-probability prediction matches the reference next token (next-token prediction accuracy).
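Perplexity and next-token accuracy follow directly from per-token model outputs, as in the sketch below. It assumes the loss is mean token-level cross-entropy in natural-log units, in which case perplexity equals exp(val_loss); whether Protean AI excludes padding or prompt tokens from these averages is not stated here, and the function name and data are hypothetical.

```python
import math


def lm_validation_metrics(target_logprobs, predicted_ids, target_ids):
    """target_logprobs: natural-log probability the model gave each reference token."""
    # Mean negative log-likelihood per token, i.e. cross-entropy in nats.
    mean_nll = -sum(target_logprobs) / len(target_logprobs)
    perplexity = math.exp(mean_nll)
    # Next-token accuracy: share of positions where the top prediction is correct.
    accuracy = sum(p == t for p, t in zip(predicted_ids, target_ids)) / len(target_ids)
    return mean_nll, perplexity, accuracy


# Hypothetical 4-token validation sequence where the model gives every
# reference token probability 0.5.
logprobs = [math.log(0.5)] * 4
loss, ppl, acc = lm_validation_metrics(logprobs, [11, 42, 7, 3], [11, 42, 9, 3])
print(round(loss, 4), round(ppl, 4), acc)
```

A perplexity of 2 means the model is, on average, as uncertain as a uniform choice between two tokens; a model that is uniform over a vocabulary of V tokens has perplexity V.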

Text Generation

We are working on implementing evaluation metrics for this task.

Compare Metrics Within a Single Run

Protean AI provides a comprehensive view of metrics across all training epochs. Because each evaluation pass takes time to complete, running evaluation after every optimization step is not recommended.

Instead, run evaluation every 10% of the total number of steps in an epoch, which gives roughly ten evaluations per epoch. This frequency is enough to reveal the trends and stability of the model's metrics while keeping evaluation overhead low.
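One way to turn the 10% guideline into concrete evaluation points is sketched below. The helper name and signature are hypothetical, and how Protean AI actually schedules evaluations is not described in this section.

```python
def evaluation_steps(steps_per_epoch, num_epochs, fraction=0.10):
    """Global optimizer steps at which to run evaluation.

    Evaluates every `fraction` of an epoch (10% by default), i.e. roughly
    1 / fraction evaluations per epoch instead of one per optimizer step.
    """
    interval = max(1, round(steps_per_epoch * fraction))
    total_steps = steps_per_epoch * num_epochs
    return list(range(interval, total_steps + 1, interval))


# Hypothetical run: 1,200 optimizer steps per epoch for 3 epochs.
steps = evaluation_steps(steps_per_epoch=1200, num_epochs=3)
print(len(steps), steps[:3], steps[-1])  # 30 [120, 240, 360] 3600
```

At 1,200 steps per epoch this yields an evaluation every 120 steps, or 30 evaluations over three epochs instead of 3,600. The `max(1, ...)` guard keeps very short epochs from producing a zero interval.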

The x-axis shows the evaluation step and the y-axis shows the metric value. A tooltip displays the epoch and step at which each evaluation was performed.

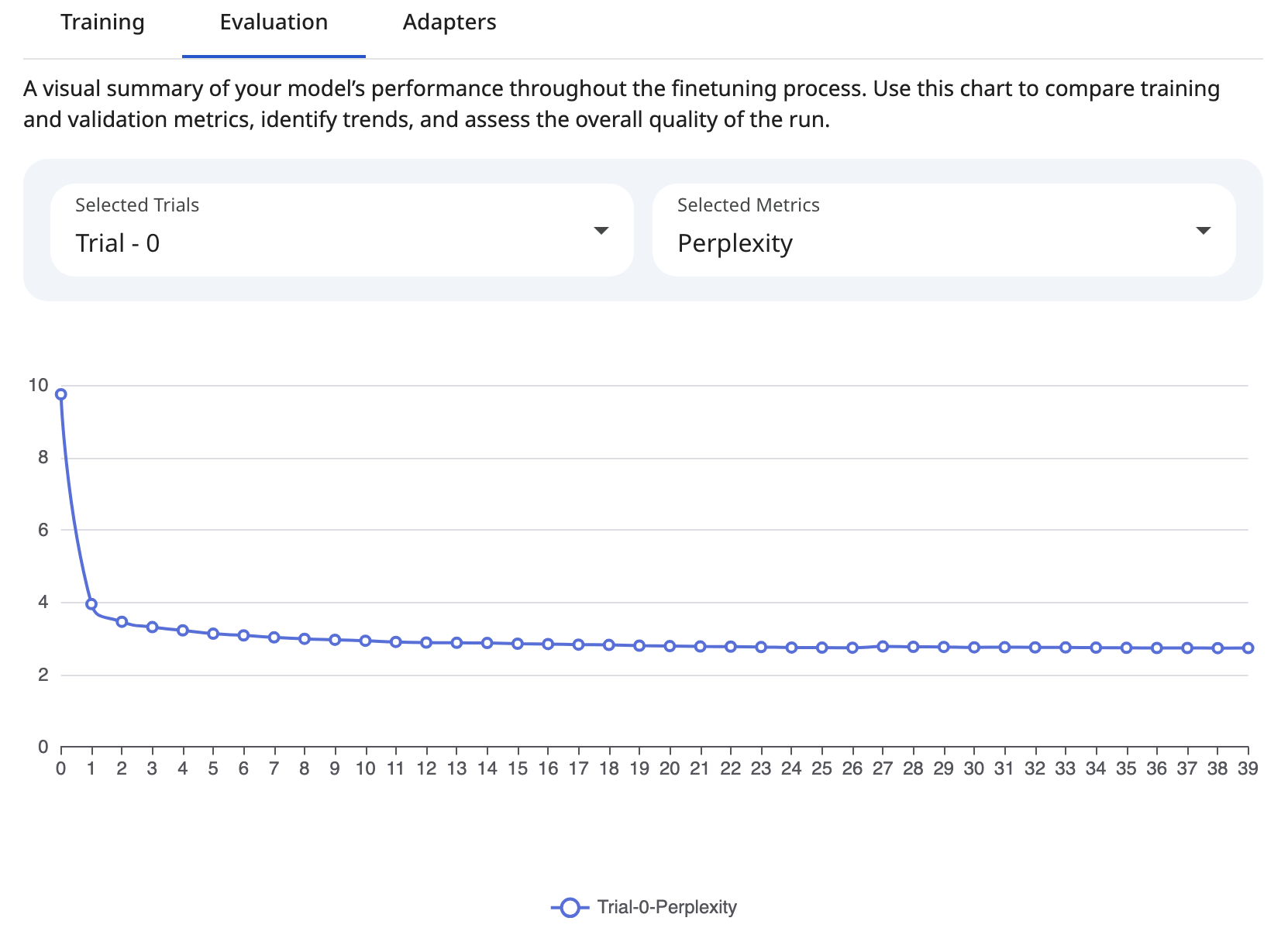

Comparing metrics across epochs helps identify trends and the impact of hyperparameter adjustments. The following charts compare metrics across epochs within a single trial.

Snapshot of Protean AI Platform

Compare Metrics Across Trials

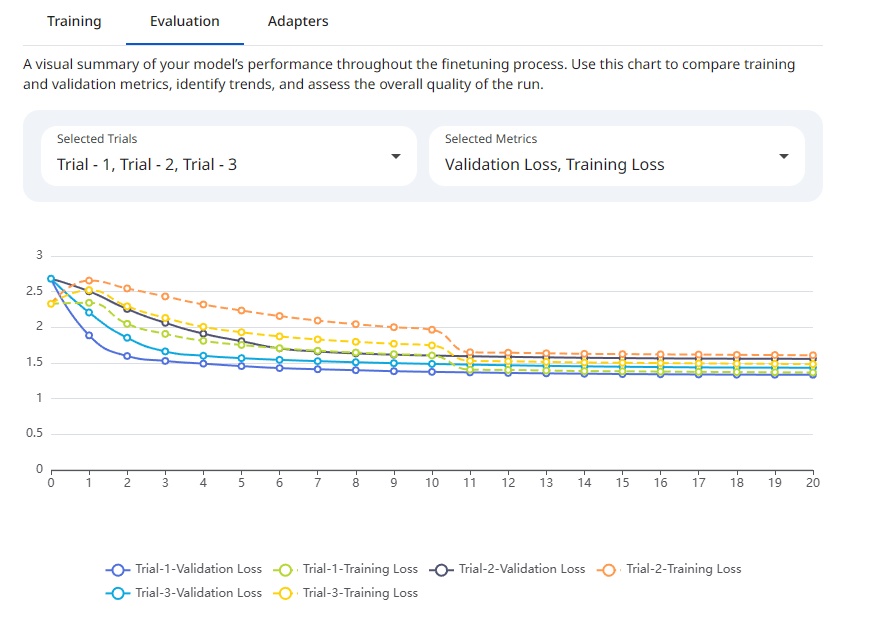

Evaluating a single fine-tuning run in isolation provides limited insight. To optimize performance, compare metrics across multiple trials (experiments) to identify trends, stability, and the impact of hyperparameter adjustments. Protean AI supports comparing metrics across trials to help identify the best-performing model: select the trials and metrics to compare from the dropdown menu. The chart shows such a comparison of metrics across multiple trials.

Snapshot of Protean AI Platform

Model Acceptance Criteria (Thresholds)

We are working on more detailed guidelines for this section.

Workflow

- Loss Analysis: Verify that `val_loss` is decreasing and converging. Sudden spikes may indicate catastrophic forgetting or learning rate instability.

- Correlation Check (Bi-Encoders): Prioritize Spearman over Pearson to ensure that the ranking of retrieved items remains consistent even if absolute similarity scores shift.

- Imbalance Verification (Classification): In cases of class imbalance, prioritize F1-Macro (Multi-Class) or LRAP (Multi-Label) over raw Accuracy to ensure minority classes are not being ignored.

- Generative Stability: For decoders, monitor the relationship between `val_loss` and `perplexity`. If `val_loss` decreases while `accuracy` plateaus, investigate potential overfitting to specific formatting patterns.
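The loss and stability checks above can be partly automated. The sketch below flags validation-loss spikes and detects a still-decreasing `val_loss` alongside a flat accuracy curve. The spike ratio (1.5x the best loss so far), the plateau window and tolerance, the function names, and the sample values are arbitrary assumptions chosen for illustration, not Protean AI thresholds.

```python
def find_loss_spikes(val_losses, ratio=1.5):
    """Indices where val_loss exceeds `ratio` times the best loss seen so far."""
    spikes = []
    best = float("inf")
    for i, loss in enumerate(val_losses):
        if loss > ratio * best:
            spikes.append(i)
        best = min(best, loss)
    return spikes


def has_plateaued(values, window=3, tol=1e-3):
    """True if the last `window` values span less than `tol`."""
    if len(values) < window:
        return False
    recent = values[-window:]
    return max(recent) - min(recent) < tol


# Hypothetical decoder run, one entry per evaluation.
val_loss = [2.10, 1.60, 1.30, 1.20, 2.40, 1.15, 1.10, 1.05]
accuracy = [0.41, 0.47, 0.50, 0.52, 0.45, 0.5205, 0.5210, 0.5208]

print("loss spikes at evaluations:", find_loss_spikes(val_loss))
if not has_plateaued(val_loss) and has_plateaued(accuracy):
    print("val_loss still falling while accuracy is flat: check for format overfitting")
```

Comparing against the best loss so far, rather than the previous value, keeps a slow upward drift from hiding a spike; tune the thresholds to the noise level of your own runs.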