Configuration

This guide details the configurable settings ("knobs and dials") available when fine-tuning a Large Language Model (LLM) using Protean AI. Fine-tuning is analogous to training a new employee: decisions must be made regarding the volume of learning, the pace of instruction, and the specific skills to prioritize.

General Settings

These settings control the behavior of the fine-tuning process.

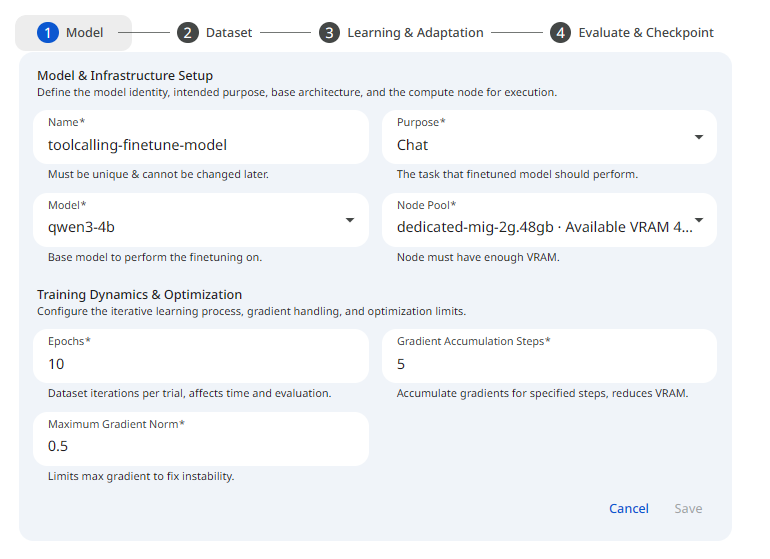

See the screenshot below for an example of Finetune General configuration.

Snapshot of Protean AI Platform

Name

The model name is a unique identifier used to look up the model in the registry. It must be unique across all models in the registry and can contain alphanumeric characters.

Purpose

The purpose of the model is a description of the task it is intended to perform. The following table summarizes the purposes that Protean AI supports.

| Purpose | Use Case |
|---|---|
| Chat | Conversational Agent, Tool Calling, Thinking |
| Text Generation | Code Completion, Sentence Completion (used in email clients or writing tools) |
| Similarity | Similarity Search, RAG embeddings |
| Reranking | Search result reranking, retrieval refinement, relevance scoring, RAG ranking |
| Multi-label Classification | Tagging, topic assignment, content moderation, intent detection |
| Multi-class Classification | Intent classification, document categorization, sentiment analysis |

Model

The model field specifies the model to be fine-tuned. Only registered models can be fine-tuned. See Model Registry for information on how to register a model. See Model Selection for more information on how to select a model for fine-tuning.

Epochs

An epoch is one complete pass through the entire training dataset, allowing the model to see and learn from every single data sample once, updating its internal parameters based on the errors. Think of it like reading a textbook once for studying; one epoch means reading the whole book, while multiple epochs are like reading it several times to master the material. The number of epochs specifies the number of times the model will cycle through the entire dataset.

Multiple epochs are usually needed for the model to iteratively improve; however, too many can lead to overfitting. See Balancing Performance for more information. For large datasets, 1–3 epochs are usually enough. Monitor validation loss to stop training before the model begins to overfit (memorize) the data.

Gradients Accumulation Steps

Gradient accumulation simulates a larger batch size without increasing memory usage. See Gradient Accumulation for more information. The number of gradient accumulation steps specifies how many mini-batches are accumulated before a parameter update is performed, so each update is based on Batch Size × Gradient Accumulation examples. For example:

- If Batch Size is 2 and Gradient Accumulation is 8, each parameter update is based on 16 examples.

- If Batch Size is 4 and Gradient Accumulation is 4, each parameter update is also based on 16 examples.
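The relationship between the two settings is a simple product. The sketch below is illustrative only; the function name `effective_batch_size` is hypothetical and not part of Protean AI.

```python
def effective_batch_size(batch_size: int, gradient_accumulation_steps: int) -> int:
    """Number of examples that contribute to a single parameter update."""
    if batch_size < 1 or gradient_accumulation_steps < 1:
        raise ValueError("both values must be positive integers")
    return batch_size * gradient_accumulation_steps


print(effective_batch_size(2, 8))  # 16
print(effective_batch_size(4, 4))  # 16
```

Only `batch_size` examples are held in memory at once; the accumulation steps trade extra time for the larger effective batch.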

Max Gradient Norm

The maximum gradient norm is an upper limit on the size of a training update. It is enforced through a technique called "Gradient Clipping", which scales gradients down whenever their norm exceeds the threshold. It is usually set to 1.0. This acts as a safety valve against "exploding gradients," ensuring that a single "bad" data point doesn't corrupt the entire model during an update. See Gradient Clipping for more information.
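A minimal sketch of norm-based clipping, assuming the common formulation in which all gradients are rescaled by the same factor when their combined L2 norm exceeds the threshold. The guide does not show Protean AI's internal implementation, and the function name `clip_by_global_norm` is hypothetical.

```python
import math
from typing import List


def clip_by_global_norm(grads: List[float], max_norm: float) -> List[float]:
    """Rescale gradients so that their L2 norm does not exceed max_norm."""
    total_norm = math.sqrt(sum(g * g for g in grads))
    if total_norm <= max_norm:
        return list(grads)  # already within the limit, leave unchanged
    scale = max_norm / total_norm
    return [g * scale for g in grads]


# A gradient with norm 5.0 is scaled down to norm 1.0 (direction is preserved).
clipped = clip_by_global_norm([3.0, 4.0], max_norm=1.0)
print(clipped)
```

Because every component is multiplied by the same factor, clipping changes the size of the update but not its direction.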

Node Pool

The node pool field specifies the compute resources to be used for training. See Node Pools for more information.

Dataset Settings

These settings control the training data, the evaluation data, and related options.

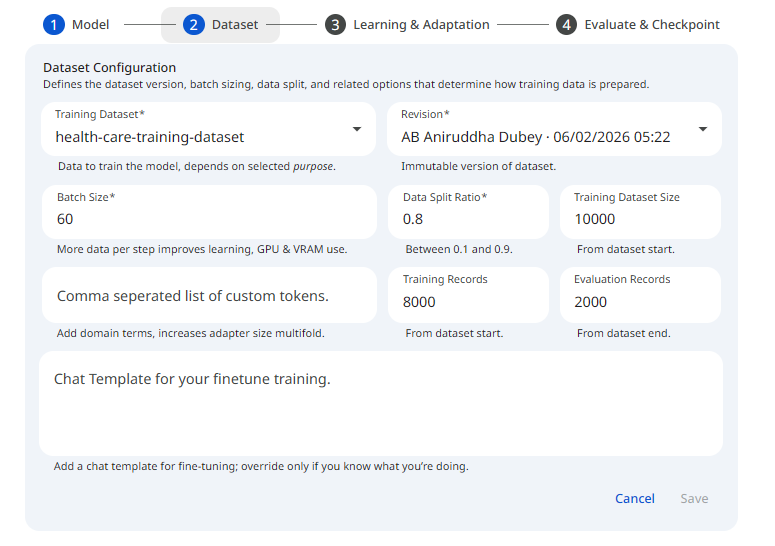

See the screenshot below for an example of Finetune Dataset configuration.

Snapshot of Protean AI Platform

Training Dataset

The training dataset is the data used to train the model. At least 1,000 examples are recommended. The dataset format is very important for fine-tuning; using the wrong format can lead to incorrect results. See Dataset for information on the schema and structure of the data.

Training data must be registered in the dataset registry before it can be used for fine-tuning. See Training Datasets for how to register a training dataset and make it available for fine-tuning.

Revision

The revision field specifies the revision of the training dataset to be used for fine-tuning. See Dataset Revisions for more information.

Batch Size

The batch_size parameter defines how many samples from the training dataset are processed together.

Data Split Ratio

The data_split_ratio parameter defines the ratio of training data to evaluation data.

The data split ratio is used to split the training dataset into training and evaluation datasets.

The training data is used to train the model. The evaluation data is used to monitor the model's performance during training.

Example: data_split_ratio = 0.8 means that the first 80% of the training dataset is used for training, and the last 20% is used for evaluation.
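The split described above can be sketched as a positional slice. This is an illustration, not Protean AI's code: the function name `split_dataset` is hypothetical, and truncating the cut point to a whole number is an assumption.

```python
from typing import List, Tuple


def split_dataset(examples: List, data_split_ratio: float) -> Tuple[List, List]:
    """Return (training, evaluation): the first part trains, the rest evaluates."""
    if not 0.0 < data_split_ratio < 1.0:
        raise ValueError("data_split_ratio must be between 0 and 1")
    cut = int(len(examples) * data_split_ratio)  # assumption: round down
    return examples[:cut], examples[cut:]


training, evaluation = split_dataset(list(range(10)), 0.8)
print(len(training), len(evaluation))  # 8 2
```

Because the split is positional rather than random, a dataset that is sorted (for example by topic) would yield an evaluation set that differs from the training set; shuffling before registration avoids that.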

Custom Tokens

The custom_tokens parameter defines the list of custom tokens to be added to the tokenizer vocabulary.

Without custom tokens, a model may see a specialized word (such as the product ID PX-900 or the function name get_auth_token) as a series of fragments, for example P, X, -, 9, 0, 0.

Impact: Once added as a custom token, the word becomes a single unit. This reduces the total sequence length, which lets you fit more information into the same context window and speeds up processing.

Be careful with the "under the hood" impact, embedding matrix expansion: every custom token you add makes the model's Embedding Layer and Language Modeling Head (the input and output layers) grow. Protean AI resizes the model's token embeddings automatically to accommodate the new vocabulary size. However, this increases the size of the model adapter, which now has to store two additional layers (the embeddings and the head).
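To get a feel for the size impact, the sketch below estimates how many parameters the two extra layers contain. It assumes the embedding layer and the language modeling head are separate (untied) matrices of shape vocabulary size × hidden size and that both are stored in full with the adapter. The function name and the example numbers (a 32,000-token vocabulary and a hidden size of 4,096) are hypothetical.

```python
def extra_adapter_parameters(vocab_size: int, num_custom_tokens: int, hidden_size: int) -> int:
    """Parameters in the resized embedding layer plus the resized LM head."""
    new_vocab_size = vocab_size + num_custom_tokens
    per_layer = new_vocab_size * hidden_size
    return 2 * per_layer  # embeddings + head (assumed untied)


params = extra_adapter_parameters(vocab_size=32_000, num_custom_tokens=50, hidden_size=4_096)
print(f"{params:,}")  # 262,553,600
```

Under these assumptions the cost is dominated by the existing vocabulary, not by the handful of new tokens, so adding even one custom token has a large fixed cost for the adapter size.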

Chat Template

This parameter applies only to instruction-tuned models used for the chat objective.

A chat template is a Jinja template stored in the tokenizer's chat_template attribute.

It is strongly recommended to use the default chat template, which is done by leaving the chat template parameter empty.

In cases where the default chat template does not work (for example, because of a defect), you can supply a custom chat template.

Thinking (chain-of-thought) or tool-calling capabilities can be added to an instruction-tuned model that does not already have them. To do this, add special tokens to the tokenizer vocabulary using the custom_tokens parameter, and then use those tokens in the chat template. For this to work, the model must be fine-tuned on a dataset that contains the special tokens.

The dataset must also be large enough (usually tens of thousands of high-quality examples) for the model to retain the special tokens.

Hyperparameter Settings

Hyperparameters are external configurations that dictate how the model learns. Choosing the right ones is often called "Hyperparameter Tuning".

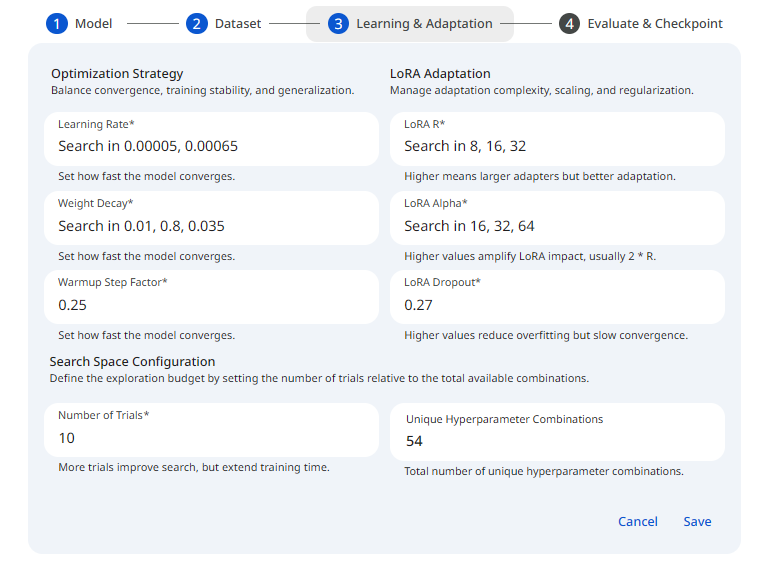

See the screenshot below for an example of Finetune Hyperparameter configuration.

Snapshot of Protean AI Platform

Learning Rate

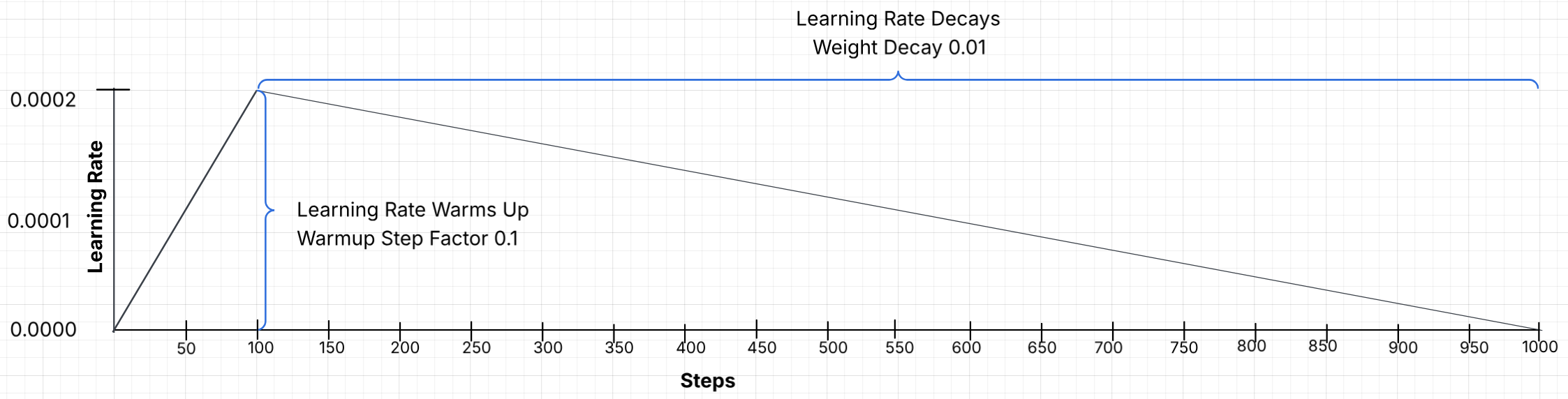

This is arguably the most important hyperparameter. It determines the "size" of the optimizer's movement when updating model weights. The learning rate acts as a multiplier: it is multiplied by the gradient (or slope) of the loss function, and in gradient descent this product determines the magnitude of the update applied to each weight.

Too High Value: The model processes material too quickly, missing details or causing training failures.

Too Low Value: Learning progresses too slowly, potentially failing to converge within a reasonable time.

- Recommendation: 2e-4 (0.0002) is the standard starting point for QLoRA/LoRA fine-tuning.
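The effect of the learning rate can be seen on a toy problem. The sketch below uses plain gradient descent (not AdamW) to minimize f(w) = w², whose gradient is 2w; the function name and the learning rates chosen are purely illustrative and are not recommendations for LLM fine-tuning.

```python
def minimize_quadratic(learning_rate: float, steps: int = 20, start: float = 1.0) -> float:
    """Run plain gradient descent on f(w) = w**2 and return the final weight."""
    w = start
    for _ in range(steps):
        gradient = 2.0 * w                 # slope of the loss at w
        w = w - learning_rate * gradient   # update = learning rate x gradient
    return w


print(minimize_quadratic(0.1))     # moves close to the minimum at 0
print(minimize_quadratic(1.1))     # too high: overshoots and diverges
print(minimize_quadratic(0.0001))  # too low: barely moves from the start
```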

Warmup Step Factor

The warmup step factor defines the length of the initial training phase, as a fraction of the total training steps, during which the learning rate increases gradually from a very low value to the target (maximum) learning rate. Warmup stabilizes training by preventing premature divergence caused by large early gradients, giving the model weights time to adjust to the new data.

- Recommendation: A factor of 0.05 (5%) or 0.1 (10%) provides a smooth start.
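A minimal sketch of a warmup schedule, assuming the factor is a fraction of the total training steps and that the increase is linear. What happens after warmup (for example, decay) is not described in this guide, so the sketch simply holds the target rate. The function name is hypothetical.

```python
def warmup_learning_rate(step: int, total_steps: int, target_lr: float,
                         warmup_step_factor: float) -> float:
    """Learning rate at a given step (0-based) with linear warmup."""
    warmup_steps = round(total_steps * warmup_step_factor)
    if warmup_steps == 0 or step >= warmup_steps:
        return target_lr  # assumption: constant after warmup
    return target_lr * (step + 1) / warmup_steps


# With 1,000 total steps and a factor of 0.05, warmup lasts 50 steps.
for step in (0, 24, 49, 500):
    print(step, warmup_learning_rate(step, 1000, 2e-4, 0.05))
```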

Weight Decay

Weight decay is a regularization technique that keeps model parameters small to prevent overfitting.

Protean AI uses the AdamW optimizer, which incorporates weight decay into the optimizer's update rule.

It gently penalizes large weights by shrinking them at every training step, independent of the gradient updates.

The effective decay applied at each step is learning_rate * weight_decay.

- Recommendation: 0.01 is the standard default and rarely requires adjustment.
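The decay part of an AdamW-style step can be sketched in isolation. The snippet below shows only the shrinkage applied to a weight and omits the gradient-based part of the update; the function name is hypothetical.

```python
def apply_decoupled_weight_decay(weight: float, learning_rate: float,
                                 weight_decay: float) -> float:
    """Shrink a weight by the fraction learning_rate * weight_decay."""
    return weight * (1.0 - learning_rate * weight_decay)


# With learning_rate=2e-4 and weight_decay=0.01, each step shrinks
# a weight by 0.0002% (a factor of 1 - 2e-6).
print(apply_decoupled_weight_decay(1.0, learning_rate=2e-4, weight_decay=0.01))
```

The per-step shrinkage is tiny, which is why the default of 0.01 acts as a gentle pull toward zero and does not compete with the gradient signal.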

Snapshot of Protean AI Platform

Rank (r)

This is the rank of the low‑rank adaptation matrices and directly controls how many new trainable parameters you add.

Lower r (e.g. 4–8): fewer adapter parameters, lower VRAM and faster, but less expressive updates; good for simple tasks or light domain shifts.

Medium r (e.g. 16–32): common "sweet spot" for instruction‑tuning and many LLM fine‑tunes.

High r (e.g. 64–256): more memory and compute; necessary if the model is being taught a massive amount of new information (such as a new language or a complex technical domain), but it increases the risk of overfitting and yields diminishing returns.

- Recommendation: Start with 16. If the model fails to retain enough information, increase to 32 or 64.
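To see how rank drives the number of trainable parameters, recall that LoRA replaces the update to a d_out × d_in weight matrix with two smaller matrices of shapes d_out × r and r × d_in. The sketch below counts the adapter parameters for one such matrix; the 4,096 × 4,096 layer size is a hypothetical example, and the function name is not part of Protean AI.

```python
def lora_parameter_count(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters LoRA adds to one d_out x d_in weight matrix."""
    return rank * (d_in + d_out)


full = 4096 * 4096
for r in (8, 16, 64):
    added = lora_parameter_count(4096, 4096, r)
    print(f"r={r}: {added:,} adapter parameters ({added / full:.2%} of the full matrix)")
```

The count grows linearly with r, so moving from 16 to 64 quadruples adapter memory for every adapted layer.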

Alpha

Alpha is the scaling factor that controls how strongly the LoRA update influences the base model's weights.

Lower alpha makes the LoRA update more conservative.

Higher alpha makes the LoRA update more influential.

- Recommendation: A general rule is Alpha = 2 × Rank.

  - If Rank is 16, set Alpha to 32.

  - If Rank is 64, set Alpha to 128.
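In the standard LoRA formulation, the adapter's output is multiplied by Alpha / Rank before it is added to the base model's output. The sketch below assumes that formulation (the guide does not state which variant Protean AI uses) and shows why keeping Alpha at 2 × Rank holds the strength of the update constant as the rank changes. The function name is hypothetical.

```python
def lora_scaling(alpha: float, rank: int) -> float:
    """Multiplier applied to the LoRA update in the standard formulation."""
    if rank < 1:
        raise ValueError("rank must be a positive integer")
    return alpha / rank


print(lora_scaling(32, 16))   # 2.0
print(lora_scaling(128, 64))  # 2.0
print(lora_scaling(32, 64))   # 0.5: rank raised without raising alpha
```

Raising the rank without raising Alpha therefore makes the update more conservative, which can hide the benefit of the extra capacity.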

Dropout

LoRA fine-tuning, while efficient, can still overfit to small, specialized training datasets. Dropout is a regularization technique that randomly discards some of the model's neurons during training, forcing the model to learn more robust patterns rather than memorizing the training data. When lora_dropout is set (e.g., to 0.1), 10% of the neurons in the low-rank adapters are randomly set to zero during each forward and backward pass.

- Recommendation: Start with 0 and increase only if overfitting is observed. If the model then underfits, decrease it. Commonly recommended values when dropout is needed:

  - 7B–13B Parameters: 10% (0.1).

  - 33B–65B+ Parameters: 5% (0.05).
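A minimal sketch of what dropout does to a list of activations, assuming the common "inverted dropout" variant in which surviving values are scaled up by 1 / (1 - p) so that the expected total stays the same. Exactly where Protean AI applies dropout inside the adapter is not described in this guide, and the function name is hypothetical.

```python
import random
from typing import List


def dropout(values: List[float], p: float, rng: random.Random) -> List[float]:
    """Zero each value with probability p and rescale the survivors."""
    if not 0.0 <= p < 1.0:
        raise ValueError("p must be in [0, 1)")
    keep_scale = 1.0 / (1.0 - p)
    return [0.0 if rng.random() < p else v * keep_scale for v in values]


rng = random.Random(0)  # fixed seed so the example is repeatable
out = dropout([1.0] * 1000, p=0.1, rng=rng)
print(sum(1 for v in out if v == 0.0), "of 1000 values were dropped")
```

Dropout is active only during training; at inference time all values pass through unchanged.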

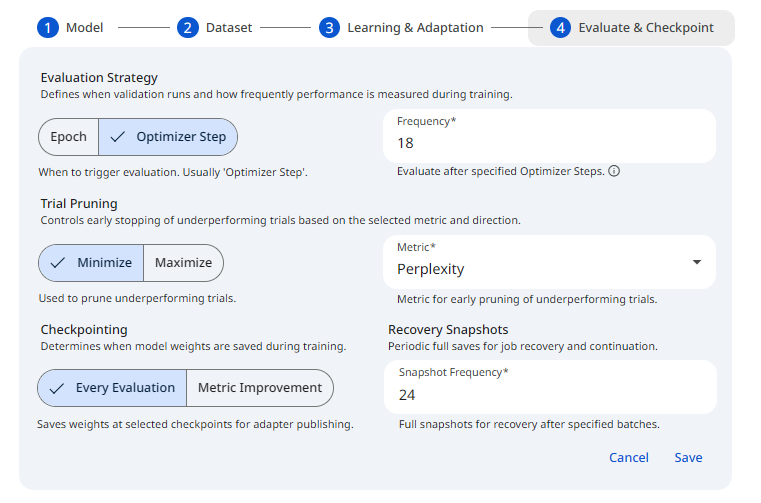

Evaluation & Snapshot Settings

These settings control the evaluation and snapshot process. They are used to monitor the model's performance during training and create snapshots for recovery purposes. Evaluation results can be used to determine when to stop training. Snapshots are used to recover from a crashed training session.

See the screenshot below for an example of Finetune Evaluation configuration.

Snapshot of Protean AI Platform

Evaluation Level

Determines the unit of measurement for triggering an evaluation. Options are Epoch (one full pass through the dataset) or Optimizer Step (after a specific number of optimizer steps). If the number of epochs is 1–3, Optimizer Step is recommended, because evaluating only at epoch level does not give enough insight into how training is progressing.

- Recommendation: Optimizer Step.

Evaluation Frequency

The numeric interval at which evaluation occurs, based on the selected Level. For example, setting this to 1 evaluates after every epoch or every optimizer step. Evaluating at every optimizer step is not recommended because it can slow down training. Typically, evaluation should occur every 10%–20% of the training steps in an epoch, which is frequent enough to monitor the model's performance and stop training if needed.

- Recommendation: 20% of the training steps in an epoch.
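Turning the 20% guideline into a concrete number requires the count of optimizer steps per epoch. The sketch below assumes one optimizer step consumes Batch Size × Gradient Accumulation examples and that a partial final batch still counts as a step. The function name and the example dataset size are hypothetical.

```python
import math


def evaluation_frequency(num_training_examples: int, batch_size: int,
                         gradient_accumulation_steps: int,
                         fraction_of_epoch: float = 0.2) -> int:
    """Optimizer steps between evaluations for a given fraction of an epoch."""
    examples_per_step = batch_size * gradient_accumulation_steps
    steps_per_epoch = math.ceil(num_training_examples / examples_per_step)
    return max(1, round(steps_per_epoch * fraction_of_epoch))


# 8,000 training examples, Batch Size 2, Gradient Accumulation 8:
# 500 optimizer steps per epoch, so evaluate every 100 steps.
print(evaluation_frequency(8000, batch_size=2, gradient_accumulation_steps=8))  # 100
```

Remember to count only the training portion of the dataset (after the data split) when estimating steps per epoch.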

Evaluation Metric

The specific performance metric (e.g., Validation Loss, Accuracy) used to evaluate the model's performance, make pruning decisions, and track the model's progress.

- Recommendation: Validation Loss

Evaluation Metric Direction

Defines the goal for the chosen evaluation metric. Use Minimize for metrics like Loss and Maximize for metrics like Accuracy or F1-score. This logic helps the system prune trials that aren't improving.

- Recommendation: Minimize (in conjunction with Validation Loss)

Snapshot

A snapshot is a comprehensive record of the state of the training process. This property defines the moments when the snapshot should be made. Possible values are:

- Evaluation: Every evaluation is eligible for a snapshot. The Snapshot Frequency property determines how often a snapshot is actually taken.

- Metric Improvement: Every time the evaluation metric improves, a snapshot can be taken. The Snapshot Frequency property determines how often a snapshot is actually taken.
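The Metric Improvement option depends on the metric direction to decide what counts as "better". The sketch below illustrates that logic only; it is not Protean AI's implementation, it ignores the Snapshot Frequency property, and the function names and the strings "minimize" and "maximize" are hypothetical.

```python
from typing import List, Optional


def is_improvement(current: float, best: Optional[float], direction: str) -> bool:
    """True if current beats the best value seen so far."""
    if best is None:
        return True  # the first evaluation always counts
    if direction == "minimize":
        return current < best
    if direction == "maximize":
        return current > best
    raise ValueError("direction must be 'minimize' or 'maximize'")


def snapshot_points(metric_values: List[float], direction: str) -> List[int]:
    """Indices of the evaluations that would be eligible for a snapshot."""
    best: Optional[float] = None
    points = []
    for index, value in enumerate(metric_values):
        if is_improvement(value, best, direction):
            best = value
            points.append(index)
    return points


# Validation loss over four evaluations: the third one got worse, so it is skipped.
print(snapshot_points([1.2, 0.9, 1.0, 0.8], "minimize"))  # [0, 1, 3]
```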

Snapshot Frequency

Determines how often full training snapshots (states) are saved for recovery purposes. Increasing this value can reduce overhead if evaluations are happening very frequently.

Quick "Copy-Paste" Cheat Sheet

The following values serve as a reliable starting point for chat objective on instruction tuned models.

| Parameter | Recommended Default | Adjustment Criteria |
|---|---|---|
| Rank (r) | 16 | Increase to 64 if the task is highly complex. |
| LoRA Alpha | 32 | Maintain at 2 × Rank (e.g., if Rank=64, Alpha=128). |
| Learning Rate | 2e-4 | Lower to 5e-5 if the training graph appears unstable. |
| Epochs | 1 | Increase to 3 if there are fewer than 1,000 data examples. |
| Batch Size | 2 (or higher) | Increase until 90% GPU usage is reached. |
| Weight Decay | 0.01 | Maintains stability; usually requires no change. |
| Warmup Step Factor | 0.05 or 0.1 | Ensures a smooth training initialization. |