Model Deployment

Model Deployment is the execution layer of Protean AI. It converts registered models into production-ready inference services by managing deployment, resource allocation, configuration, and lifecycle control. It abstracts infrastructure complexity while providing fine-grained control over performance, memory usage, startup behavior, and safe, predictable placement on suitable nodes.

Model Deployment provides the following advantages:

- Clear separation between model preparation and model execution

- Automatic VRAM estimation based on deployment configuration

- Intelligent node selection based on resource availability

- Consistent deployment workflow across different model types

- Full lifecycle control without manual infrastructure management

Deployment Configuration

To create a model deployment, you define the deployment characteristics of your model runtime. This includes:

- A name to identify the deployment

- A registered model from the Model Registry that you want to deploy

- The quantized variant of the model to deploy

- The context size used during inference

- The number of parallel processes for concurrent execution
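As a rough illustration, the fields above can be collected into a single configuration record. This Python sketch is hypothetical: the `DeploymentConfig` class, its field names, and the example values are assumptions for illustration, not the platform's actual schema or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeploymentConfig:
    """Hypothetical record of the deployment characteristics listed above."""

    name: str          # unique deployment identifier
    model: str         # registered model from the Model Registry
    quantization: str  # quantized variant of the model
    context_size: int  # max tokens (input + output) per request
    parallel: int      # number of parallel processes

    def __post_init__(self) -> None:
        # Basic sanity checks only; the platform may enforce more rules.
        if self.context_size <= 0:
            raise ValueError("context_size must be positive")
        if self.parallel < 1:
            raise ValueError("parallel must be at least 1")


config = DeploymentConfig(
    name="support-chat",
    model="example-7b-instruct",
    quantization="q4",
    context_size=8192,
    parallel=4,
)
print(config)
```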

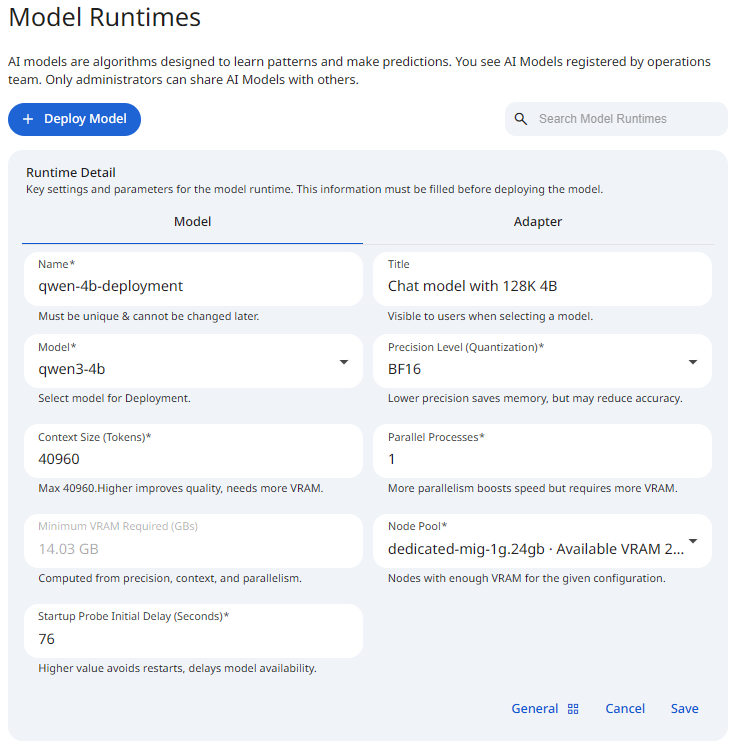

See the screenshot below for an example of Model Deployment configuration.

Snapshot of Protean AI Platform

Name

The name is a unique identifier for the model deployment. It is used in OpenAI API calls to identify the deployed model. This name must be unique across all model deployments. It can contain alphanumeric characters and dashes.
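The naming rules and the name's role in API calls can be sketched as follows. This assumes the deployment is served through an OpenAI-compatible chat completions endpoint in which the `model` field carries the deployment name; the endpoint URL is not covered here. The helper names are hypothetical, and the regular expression encodes only what this page states (letters, digits, and dashes), so any additional platform rules such as length limits are not reflected.

```python
import json
import re

# Assumed rule: one or more ASCII letters, digits, or dashes.
# Length limits or restrictions on leading dashes are not specified.
NAME_PATTERN = re.compile(r"[A-Za-z0-9-]+")


def validate_deployment_name(name: str, existing: set[str]) -> None:
    """Raise ValueError if the name is malformed or already in use."""
    if not NAME_PATTERN.fullmatch(name):
        raise ValueError(f"invalid deployment name: {name!r}")
    if name in existing:
        raise ValueError(f"deployment name already in use: {name!r}")


def chat_request_body(deployment_name: str, prompt: str) -> str:
    """Build an OpenAI-style chat completion body targeting the deployment."""
    body = {
        "model": deployment_name,  # the deployment name identifies the model
        "messages": [{"role": "user", "content": prompt}],
    }
    return json.dumps(body)


validate_deployment_name("support-chat", existing={"summarizer"})
print(chat_request_body("support-chat", "Hello"))
```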

Title

The title provides a short and clear identifier for the model deployment. It communicates the model deployment's purpose or architecture in a concise form and enables quick recognition in the list of model deployments.

Model

This field specifies the model to deploy. Only models from the Model Registry can be deployed; deploying models from other sources is not currently supported. The list contains only the models you have access to. If you do not see the model you expect, check that you have access to it.

Precision Level (Quantization)

The precision level specifies the quantization level of the model. This affects the amount of memory required to load the model and the inference speed. Lower-precision variants use fewer bits to represent each weight, so they consume less memory and generally run faster, though they can lose some output quality. See Quantization for more information.
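To see why precision matters, a back-of-the-envelope estimate of weight memory is parameter count × bits per weight ÷ 8. The sketch below assumes a hypothetical 7-billion-parameter model and ignores quantization format overhead (such as per-block scale factors), so real model files are somewhat larger than these figures.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights, in gigabytes (10**9 bytes).

    Ignores format overhead such as per-block scale factors, which makes
    real quantized files somewhat larger than this estimate.
    """
    bytes_total = num_params * bits_per_weight / 8
    return bytes_total / 1e9


# A hypothetical 7-billion-parameter model at different precision levels.
for bits in (16, 8, 4):
    print(f"{bits}-bit: {weight_memory_gb(7e9, bits):.1f} GB")
```

Going from 16-bit to 4-bit cuts the weight footprint by a factor of four in this estimate.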

Context Size

Context size defines how much information a model can consider at once during inference. It represents the maximum number of tokens (input + generated output) that the model keeps in memory for a single request. It controls the amount of conversation history, document text, or prompt data the model can process in one pass.

Context size has a significant impact on VRAM usage. As context size increases:

- More key/value (KV) cache must be stored per request

- Memory consumption grows roughly linearly with context length

- Larger context sizes reduce the number of concurrent requests a model can handle on the same hardware

For large models and long contexts, KV cache memory can exceed the memory used by the model weights themselves.
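The KV cache grows with context because, for every token kept in memory, each transformer layer stores one key vector and one value vector per KV head. A common estimate is 2 × layers × KV heads × head dimension × bytes per element × context size. The dimensions used below (32 layers, 32 KV heads, head size 128, FP16 cache) are illustrative of a 7B-class model without grouped-query attention; models with fewer KV heads need proportionally less memory, and this is not necessarily how the platform's own estimator works.

```python
def kv_cache_bytes(
    num_layers: int,
    num_kv_heads: int,
    head_dim: int,
    context_size: int,
    bytes_per_element: int = 2,  # 2 bytes assumes an FP16 cache
) -> int:
    """Approximate KV cache size for one request that fills its context.

    The leading factor 2 accounts for storing both keys and values.
    """
    per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_element
    return per_token * context_size


GIB = 1024 ** 3
# Illustrative dimensions: 32 layers, 32 KV heads, head size 128.
for ctx in (4096, 8192, 16384):
    print(ctx, kv_cache_bytes(32, 32, 128, ctx) / GIB, "GiB")
```

Doubling the context doubles the cache, which is the linear growth described above.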

Use a larger context size when:

- Processing long documents or multi-page inputs

- Performing retrieval-augmented generation (RAG) with large chunks

Prefer a smaller context size when:

- Latency is critical

- Requests are short and independent

- Maximizing throughput or concurrency is more important than long memory

Choosing the smallest context size that satisfies your use case is the most effective way to control memory usage and increase system efficiency.
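One way to apply this rule is to add your longest expected prompt to the maximum output length and pick the smallest context size that covers the total. In this sketch the helper name and the list of candidate sizes are hypothetical; use whatever sizes your deployment form offers.

```python
def smallest_sufficient_context(
    max_prompt_tokens: int, max_output_tokens: int, candidates: list[int]
) -> int:
    """Return the smallest candidate context size that fits prompt + output."""
    required = max_prompt_tokens + max_output_tokens
    fitting = [size for size in candidates if size >= required]
    if not fitting:
        raise ValueError(f"no candidate context size fits {required} tokens")
    return min(fitting)


# Hypothetical workload: prompts up to 3,000 tokens, answers up to 800 tokens.
print(smallest_sufficient_context(3000, 800, [2048, 4096, 8192, 16384]))
```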

Parallel Processes

Parallel processes define how many independent inference workers run simultaneously for a single model deployment. Each process handles requests independently, so this setting caps the number of concurrent inference requests and therefore determines the throughput of the inference service. Each parallel process loads its own execution state and allocates its own deployment buffers.
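The effect of parallel processes on concurrency can be modeled with a semaphore that has one slot per process: at most `parallel` requests are in flight, and the rest wait for a free worker. This asyncio simulation is only an illustration; whether the platform queues or rejects excess requests is not stated here, and `asyncio.sleep` stands in for real inference work.

```python
import asyncio


async def serve(requests: int, parallel: int) -> int:
    """Simulate a deployment with `parallel` workers; return peak concurrency."""
    slots = asyncio.Semaphore(parallel)  # one slot per parallel process
    active = 0
    peak = 0

    async def handle(_: int) -> None:
        nonlocal active, peak
        async with slots:  # waits here while all workers are busy
            active += 1
            peak = max(peak, active)
            await asyncio.sleep(0.01)  # stand-in for inference work
            active -= 1

    await asyncio.gather(*(handle(i) for i in range(requests)))
    return peak


print(asyncio.run(serve(requests=10, parallel=4)))  # 4
```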

VRAM Required

Context size and parallel processes interact directly:

- Larger context sizes increase memory per request

- More parallel processes multiply that memory usage

A configuration with high context size and high parallelism can exhaust VRAM very quickly, even on high-end hardware.
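The interaction can be expressed as a simple estimate: weights, plus one full context's KV cache per parallel process, plus some fixed overhead. This sketch assumes the weights are loaded once and shared by all parallel processes; the 1 GiB overhead and the example sizes are placeholders, and the platform's real calculation may include other terms.

```python
def total_vram_gib(
    weights_gib: float,
    kv_per_request_gib: float,
    parallel: int,
    overhead_gib: float = 1.0,  # placeholder for runtime buffers and overhead
) -> float:
    """Estimate VRAM: shared weights + one full KV cache per parallel process."""
    return weights_gib + parallel * kv_per_request_gib + overhead_gib


# Hypothetical sizes: 4 GiB of weights; 2 GiB of KV cache per request at a
# smaller context, 8 GiB per request at a context four times larger.
for kv_gib, parallel in ((2.0, 1), (2.0, 4), (8.0, 4)):
    total = total_vram_gib(4.0, kv_gib, parallel)
    print(f"KV {kv_gib} GiB/request x {parallel} processes -> {total:.1f} GiB")
```

Quadrupling both the context and the parallelism grows this estimate from 7 GiB to 37 GiB, which is why the two settings need to be balanced.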

Protean AI evaluates these parameters at deployment time to:

- Calculate total VRAM requirements

- Prevent unsafe deployments

- Recommend the most suitable execution node

Carefully balancing these two settings is key to achieving optimal performance, cost efficiency, and reliability. Changing any deployment parameter triggers a real-time calculation of the required VRAM. Based on this calculation, Model Deployment recommends a suitable deployment node from the available infrastructure. This ensures:

- Models are only deployed where they fit

- Hardware resources are used efficiently

- Deployment failures due to insufficient memory are avoided

Use more parallel processes when:

- Serving multiple concurrent users

- Optimizing for throughput rather than single-request latency

Prefer fewer parallel processes when:

- Running on memory-constrained hardware

- Serving large-context requests

Node Pool

The node pool specifies the node on which the model deployment will run. This is the node where the model will be loaded and executed. The node pool must have sufficient resources to run the model. The platform recommends a suitable node based on the VRAM requirements.

At the moment, the recommendation does not take into account the current load on the node.
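Node recommendation can be sketched as a best-fit search: among nodes whose VRAM capacity covers the estimated requirement, pick the smallest, which leaves larger nodes free for larger models. Like the platform's current recommendation, this sketch ignores node load. The best-fit policy, the `Node` type, and the node names are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Node:
    name: str
    vram_gib: float  # total VRAM capacity; current load is not considered


def recommend_node(nodes: list[Node], required_gib: float) -> Optional[Node]:
    """Best-fit choice: the smallest node whose capacity covers the requirement."""
    fitting = [node for node in nodes if node.vram_gib >= required_gib]
    return min(fitting, key=lambda node: node.vram_gib, default=None)


# Hypothetical node pool.
pool = [Node("gpu-a", 24), Node("gpu-b", 48), Node("gpu-c", 80)]
print(recommend_node(pool, 37.0))   # Node(name='gpu-b', vram_gib=48)
print(recommend_node(pool, 100.0))  # None
```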

Startup Probe Initial Delay

The startup probe initial delay controls how long the platform allows a model to load before it checks readiness. Use it to fine-tune readiness detection for larger models or slower initializations. If the model takes longer than the specified time to load, the platform marks the deployment as unhealthy and tries to restart it.
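The startup behavior described above can be modeled as polling a readiness check until the initial delay runs out. This is a simplified sketch: the function names are hypothetical, and the platform's actual probe details (polling interval, retry thresholds) are not documented here.

```python
import time
from typing import Callable


def await_startup(
    is_ready: Callable[[], bool],
    initial_delay_s: float,
    poll_interval_s: float = 0.05,
) -> str:
    """Return "ready" if the model loads within the delay, else "restart"."""
    deadline = time.monotonic() + initial_delay_s
    while time.monotonic() < deadline:
        if is_ready():
            return "ready"
        time.sleep(poll_interval_s)
    # Deadline passed: treat the deployment as unhealthy and restart it.
    return "ready" if is_ready() else "restart"


def fake_model(load_time_s: float) -> Callable[[], bool]:
    """Simulated model that reports ready once load_time_s has elapsed."""
    started = time.monotonic()
    return lambda: time.monotonic() - started >= load_time_s


print(await_startup(fake_model(0.1), initial_delay_s=1.0))  # ready
print(await_startup(fake_model(5.0), initial_delay_s=0.2))  # restart
```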

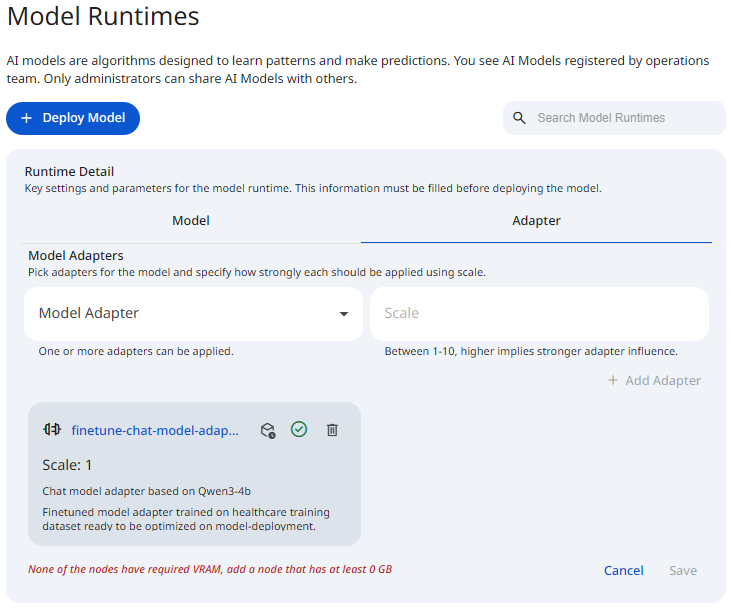

Adapters

Adapters extend the behavior of a base model without modifying its original weights, making it possible to specialize the same model for different use cases by applying different adapters at runtime. The Adapters tab allows you to attach one or more fine-tuned adapters to a model deployment. Adapters are optional. If no adapters are selected, the deployment runs using only the base model.

This section is used to select adapters and control how strongly each adapter influences the deployed model.

- Model Adapter: Select an adapter from the list of available adapters. Multiple adapters can be attached to a single deployment.

- Scale: Defines how strongly the adapter is applied at runtime. Higher values result in stronger influence. Allowed range: 1–10.
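If the adapters are LoRA-style low-rank adapters (an assumption, since this page does not name the adapter format), the role of Scale can be pictured as a weighted sum of low-rank updates applied on top of untouched base weights W. How the platform maps the 1–10 Scale value onto the underlying scaling factor is not documented, so treat s_i below as illustrative.

```latex
W_{\text{eff}} = W + \sum_{i=1}^{n} s_i \, B_i A_i,
\qquad W \in \mathbb{R}^{d \times k},\;
B_i \in \mathbb{R}^{d \times r},\;
A_i \in \mathbb{R}^{r \times k},\;
r \ll \min(d, k),\;
s_i \in [1, 10]
```

Because W itself never changes, detaching an adapter simply drops its term from the sum, and a larger s_i increases that adapter's contribution.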

See the screenshot below for an example of Model Runtime adapters.

Snapshot of Protean AI Platform

Add

To attach an adapter to a deployment, open the Adapters tab and select a Model Adapter from the dropdown. Specify a Scale value between 1 and 10 to control the adapter's influence during inference, then click Add Adapter.

Once added, the adapter becomes part of the deployment configuration and is listed below the selector.

Listing

Once added, adapters appear as cards below the selector. Each card displays the adapter identity and the configured Scale value. If no adapters are selected, an empty state is shown indicating that selected adapters will appear in this section.

Remove

Each adapter card includes a Remove action. Removing an adapter detaches it from the deployment configuration and stops applying the adapter once the updated configuration is saved. This operation does not delete the adapter artifacts from storage or remove the adapter from the registry.

- Editing the scale is currently not supported.
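The add, list, and remove behavior can be summarized in a small in-memory model. The class and method names are hypothetical, and treating Scale as an integer is an assumption; the page only gives the 1–10 range.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AdapterAttachment:
    adapter_id: str
    scale: int  # assumed integer in the documented 1-10 range


@dataclass
class DeploymentAdapters:
    """Hypothetical in-memory view of a deployment's attached adapters."""

    attached: list[AdapterAttachment] = field(default_factory=list)

    def add(self, adapter_id: str, scale: int) -> None:
        # Mirrors "Add Adapter": the scale must fall within 1-10.
        if not 1 <= scale <= 10:
            raise ValueError("scale must be between 1 and 10")
        self.attached.append(AdapterAttachment(adapter_id, scale))

    def remove(self, adapter_id: str) -> None:
        # Detaches the adapter from this configuration only; adapter
        # artifacts in storage and the registry entry are not touched.
        self.attached = [a for a in self.attached if a.adapter_id != adapter_id]

    # There is deliberately no method to edit a scale, matching the
    # current limitation described above.


adapters = DeploymentAdapters()
adapters.add("support-tone", 5)
adapters.add("legal-terms", 3)
adapters.remove("support-tone")
print([a.adapter_id for a in adapters.attached])  # ['legal-terms']
```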

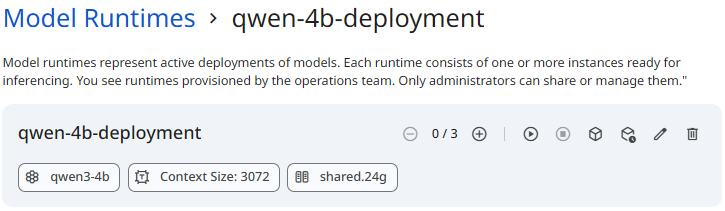

Runtime

Model Runtime provides full lifecycle control over models. You can start, stop, scale, and delete deployments while maintaining visibility into runtime behavior through logs and events.

See the screenshot below for an example of Model Runtime.

Snapshot of Protean AI Platform

Start

Starting a model runtime initializes the deployment and loads the model onto the selected execution nodes. When a runtime is started, the following actions occur:

- Compute resources are allocated

- The model is loaded into memory

- Runtime processes are initialized

- Health and readiness checks begin

Once started, the model becomes available for inference requests.

Stop

Stopping a model runtime gracefully shuts down all running instances. When a runtime is stopped, the following actions occur:

- Inference requests are terminated or drained

- Runtime processes are stopped

- Allocated resources are released

Stopped runtimes retain their deployment configuration and can be restarted without recreating it. Stopped instances do not consume compute resources and do not restart automatically. However, if a running instance becomes unhealthy and terminates, it is restarted automatically.
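The start, stop, and automatic-restart rules can be condensed into a small state model. This is a hypothetical sketch of the documented behavior, not the platform's controller; the comments note what the real platform does at each step.

```python
from dataclasses import dataclass, field


@dataclass
class Runtime:
    """Hypothetical sketch of runtime start/stop and restart behavior."""

    config: dict = field(default_factory=dict)  # kept while stopped
    desired_running: bool = False

    def start(self) -> None:
        # Real platform: allocate compute, load the model, start health checks.
        self.desired_running = True

    def stop(self) -> None:
        # Real platform: drain or terminate requests, release resources.
        # The deployment configuration is retained for a later restart.
        self.desired_running = False

    def should_restart(self, unhealthy_and_terminated: bool) -> bool:
        # Only instances that are meant to be running are restarted;
        # stopped runtimes never restart on their own.
        return self.desired_running and unhealthy_and_terminated


runtime = Runtime(config={"context_size": 8192})
runtime.start()
print(runtime.should_restart(unhealthy_and_terminated=True))  # True
runtime.stop()
print(runtime.should_restart(unhealthy_and_terminated=True))  # False
print(runtime.config)  # {'context_size': 8192}
```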

Scale

Scaling controls how many runtime instances are active for a deployment. Scaling can be adjusted at any time while the runtime is running.

Scale up

Scaling up increases the number of active instances. Use scale up when:

- Request volume increases

- Latency rises under load

- Higher availability is required

Scale down

Scaling down reduces the number of active instances. Use scale down when:

- Traffic decreases

- Optimizing for cost efficiency

- Reducing resource consumption

Scaling operations are applied dynamically without requiring model reconfiguration.
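Scaling can be thought of as reconciling the desired instance count with the instances currently running. In this sketch the plan format and instance names are hypothetical, and stopping the newest instances first is an assumed policy; the page does not say which instances are removed on scale down. Scaling up from zero, as in the deployment workflow, is simply the scale-up case.

```python
def scaling_plan(desired: int, running: list[str]) -> dict:
    """Plan the change needed to reach `desired` running instances.

    `running` is assumed to be ordered from oldest to newest.
    """
    if desired < 0:
        raise ValueError("desired instance count cannot be negative")
    delta = desired - len(running)
    if delta > 0:
        return {"action": "scale up", "start": delta, "stop": []}
    if delta < 0:
        # Assumed policy: stop the newest instances first.
        return {"action": "scale down", "start": 0, "stop": running[delta:]}
    return {"action": "none", "start": 0, "stop": []}


print(scaling_plan(1, []))  # scale up from zero
print(scaling_plan(1, ["inst-1", "inst-2", "inst-3"]))
```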

Delete

Deleting a model runtime permanently removes the deployment. When a runtime is deleted, all associated running instances are stopped and the deployment configuration is deleted. The underlying model registration artifacts remain available in the Model Registry and can be redeployed at any time. Deletion does not delete the underlying model adapter artifacts either.

- A model deployment can only be deleted if it is not being used by any Assistant or Agent.

Deletion is irreversible. Model instances and deployment configuration can't be recovered.
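The deletion guard can be sketched as a check for consumers that still reference the deployment. The mapping from Assistant or Agent names to deployment names is a hypothetical data model used only to illustrate the rule.

```python
def deletion_blockers(
    deployment: str,
    assistants: dict[str, str],
    agents: dict[str, str],
) -> list[str]:
    """List Assistants and Agents that still use `deployment`.

    `assistants` and `agents` map a hypothetical consumer name to the
    deployment name it calls. Deletion is allowed only if the list is empty.
    """
    blockers = [f"assistant:{n}" for n, d in assistants.items() if d == deployment]
    blockers += [f"agent:{n}" for n, d in agents.items() if d == deployment]
    return blockers


blockers = deletion_blockers(
    "support-chat",
    assistants={"helpdesk": "support-chat"},
    agents={"triage": "summarizer"},
)
print("can delete" if not blockers else f"blocked by {blockers}")
```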

Logs and Events

Logs and events provide operational visibility into runtimes. They help you monitor execution, diagnose failures, and understand how a deployment behaves over time at both instance and deployment scope.

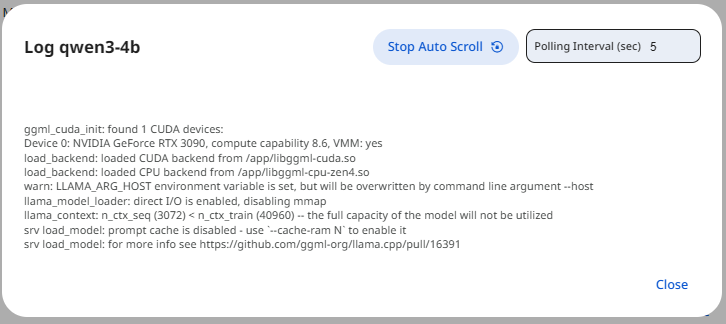

Logs

Runtime provides detailed observability to help diagnose issues and understand runtime behavior. Logs are available at the instance level, for each individual runtime instance, and include inference errors and warnings.

See the screenshot below for an example of Model Runtime Logs.

Snapshot of Protean AI Platform

Events

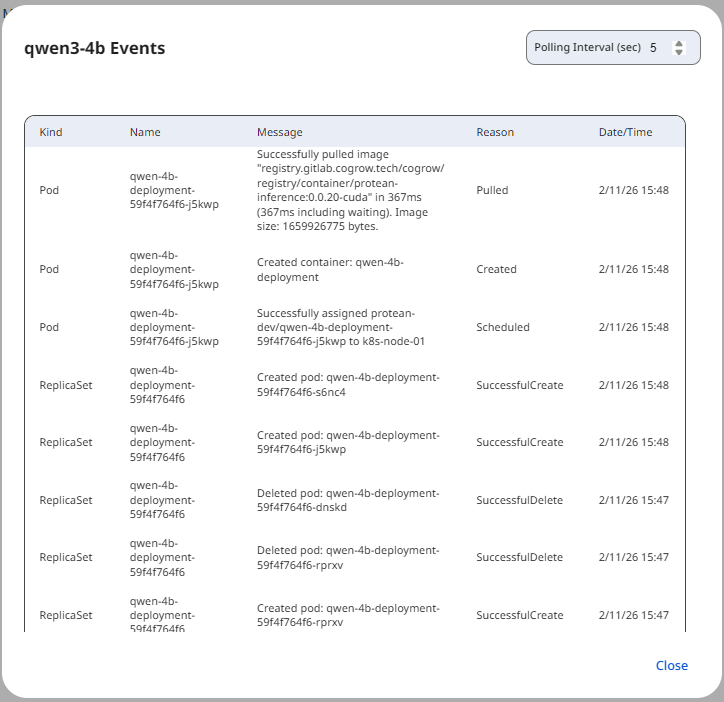

Events provide a structured view of significant runtime actions and state transitions. Events are organized into two categories:

- Instance-level events show lifecycle and execution events for a specific instance

- Deployment-level events summarize changes affecting the entire runtime

See the screenshot below for an example of Model Runtime Events.

Snapshot of Protean AI Platform

Events include:

- Scaling operations

- Scheduling decisions

- Health and readiness state changes
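Separating deployment-level from instance-level events amounts to grouping by the instance an event belongs to. The `Event` record, its fields, and the sample events are hypothetical; the platform's actual event schema is not described on this page.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Event:
    kind: str                # e.g. "scaling", "scheduling", "health"
    message: str
    instance: Optional[str]  # None for deployment-level events


def group_events(events: list[Event]) -> dict[str, list[Event]]:
    """Split events into a deployment-level bucket and per-instance buckets."""
    groups: dict[str, list[Event]] = defaultdict(list)
    for event in events:
        key = "deployment" if event.instance is None else event.instance
        groups[key].append(event)
    return dict(groups)


# Made-up sample events, for illustration only.
sample = [
    Event("scaling", "desired instances changed", None),
    Event("scheduling", "instance placed on node", "inst-1"),
    Event("health", "readiness check passed", "inst-1"),
]
for key, items in group_events(sample).items():
    print(key, [e.kind for e in items])
```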

Access Control

Access Control in Protean AI governs who can view, create, modify, and operate resources across the platform. It is designed for enterprise environments where security, isolation, and governance are mandatory.

Protean AI follows a principle of least privilege, ensuring users and systems are granted only the permissions required to perform their tasks.

| Role→ Action↓ | Admin | Model Admin | User | Owner | Viewer | Description |
|---|---|---|---|---|---|---|
| Create | Yes | Yes | No | NA | NA | Create a model deployment. |
| Read | Yes | Yes | No | Yes | Yes | Run inference against a model runtime. |
| Update | Yes | Yes | No | Yes | No | Modify model deployment characteristics. Start, stop, and scale the model runtime. |
| Delete | Yes | Yes | No | Yes | No | Delete the model deployment and runtime and remove them from the system. |
| Manage Access | Yes | Yes | No | Yes | No | Grant or revoke permissions for users and groups. |
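The table above can be expressed as a permission lookup. This sketch transcribes the table as shown and treats NA as not allowed; it also assumes a principal holding several roles gets the union of their permissions, which is an assumption rather than documented behavior.

```python
# Permission matrix transcribed from the table above.
# "NA" (not applicable) is treated as not allowed.
PERMISSIONS: dict[str, set[str]] = {
    "Admin": {"create", "read", "update", "delete", "manage_access"},
    "Model Admin": {"create", "read", "update", "delete", "manage_access"},
    "User": set(),
    "Owner": {"read", "update", "delete", "manage_access"},
    "Viewer": {"read"},
}


def is_allowed(roles: set[str], action: str) -> bool:
    """Assumed rule: allowed if any of the principal's roles grants the action."""
    return any(action in PERMISSIONS.get(role, set()) for role in roles)


print(is_allowed({"Viewer"}, "read"))    # True
print(is_allowed({"Viewer"}, "update"))  # False
```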

- Deleting a model from the registry also deletes the model artifacts from storage.

- A model can only be deleted if no runtime instances are deployed for it and it is not being used in Finetuning.

Workflow

- Select a model from Model Registry.

- Choose the quantized variant to deploy.

- Configure context size and parallel processes.

- Review the calculated VRAM requirement and node recommendation.

- (Optional) Configure advanced startup settings.

- Save the deployment configuration to create the deployment.

- This creates a deployment resource in the system with 0 desired instances.

- Scale up the deployment to start instances.

Result

After the deployment is created, it can be started as a runtime. At that point, it is loaded onto the selected node and becomes available for inference. With start, stop, scaling, and deletion controls, Model Runtime provides operational flexibility without sacrificing visibility. Combined with instance-level logs and events, these controls let you operate models in production with confidence while maintaining reliability, efficiency, and control.